Airflow Management: A Beginner's Guide

Orchestrating data pipelines efficiently, with reliability and scalability, is crucial for workflow automation and data processing.

What is Airflow Management?

Airflow Management, as used in this guide, covers the techniques for managing the flow of work through a data system with Apache Airflow, so that pipelines run efficiently and meet the needs of the operations that depend on them. (The same phrase also describes physical air handling in data centers, where good practice reduces energy consumption; that is a separate topic.) A well-executed strategy improves performance and reliability. It ties directly into operational areas such as ETL, where efficient data processing relies on well-orchestrated workflows.

The Role of Airflow Management in Data Pipelines

In data pipelines, the way workflows are managed has a significant impact on how quickly and efficiently data is processed. With proper management, we reduce latency and speed up data movement from source to destination. Tools such as Apache Airflow orchestrate complex workflows while respecting the dependencies between tasks.

Understanding Workflow Orchestration

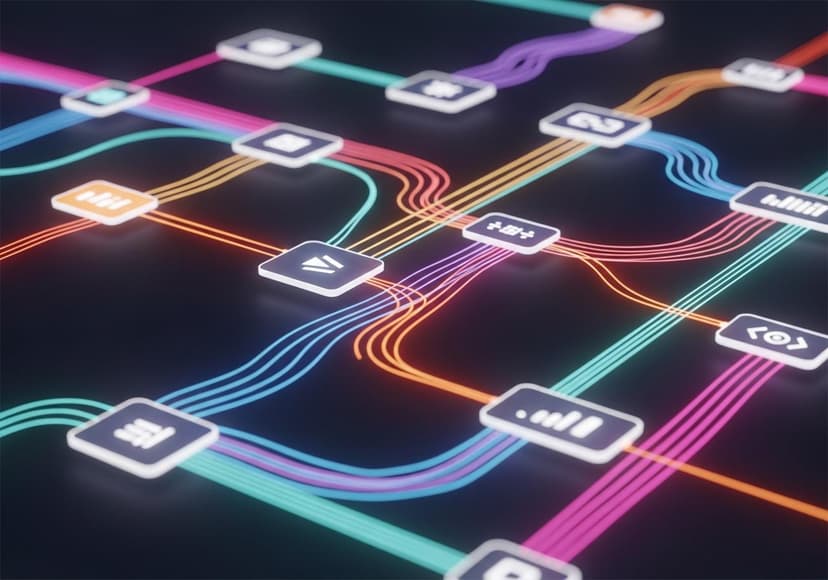

Workflow orchestration within Airflow uses directed acyclic graphs (DAGs) to describe tasks and the dependencies between them. Each task is an instance of an operator, which defines what needs to be done, whether that is loading data or running a transformation. Because the graph has no cycles, Airflow can always work out a valid order in which to schedule and execute the tasks.
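To make the idea of a dependency graph concrete, here is a minimal sketch in plain Python. It does not use Airflow at all; it relies on the standard library's `graphlib` module, and the task names (`extract`, `transform`, `load`) are assumptions chosen for illustration. It only shows how a valid run order falls out of the declared dependencies.

```python
from graphlib import TopologicalSorter

# Hypothetical three-step pipeline: each key maps a task to the set of
# tasks that must finish before it can start.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
}


def execution_order(deps):
    """Return one valid run order that respects every dependency."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)  # ['extract', 'transform', 'load']
```

Airflow's scheduler does far more than this (it tracks run state, retries, and schedules), but the ordering guarantee rests on the same principle.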

Task Scheduling and Dependencies

Task scheduling in Apache Airflow plays a crucial role in managing resources effectively. Understanding dependencies lets you arrange tasks so that each one starts only after the tasks it depends on have finished, and so that no two tasks end up waiting on each other. Running tasks in the right order prevents a step from reading data that has not been written yet, which is a common source of data loss and processing errors. With a well-ordered task schedule, your ETL processes become more effective and reliable.
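The two ideas in this section, running independent tasks side by side and refusing circular dependencies, can be sketched with the standard library alone. This is an illustration under stated assumptions, not Airflow's scheduler: the function names `run_batches` and `has_cycle` and the task names are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter


def run_batches(deps):
    """Group tasks into batches. Tasks in the same batch have no unmet
    dependencies, so they could run in parallel."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises CycleError if the graph contains a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)  # mark the batch finished to unlock the next one
    return batches


def has_cycle(deps):
    """Return True if the tasks depend on each other in a loop."""
    try:
        TopologicalSorter(deps).prepare()
    except CycleError:
        return True
    return False


pipeline = {
    "extract_orders": set(),
    "extract_customers": set(),
    "join": {"extract_orders", "extract_customers"},
    "load": {"join"},
}

print(run_batches(pipeline))
# [['extract_customers', 'extract_orders'], ['join'], ['load']]
print(has_cycle({"a": {"b"}, "b": {"a"}}))  # True
```

The two extract tasks land in the same batch because neither depends on the other, while the circular pair `a` and `b` is rejected before anything runs.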

DAGs: The Backbone of Airflow Management

The concept of DAGs is pivotal in Airflow management. A DAG is essentially a declaration of tasks and their relationships, and Airflow can render that declaration as a graph so the pipeline can be inspected visually. Knowing how to construct a proper DAG greatly influences how efficiently you can manage workflow orchestration. It lets teams see dependencies and adjust them when things aren't flowing smoothly.
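In Airflow, relationships between tasks are commonly declared with the `>>` operator, as in `extract >> transform`. The sketch below imitates that syntax with a toy `Task` class so the mechanics are visible; the class and the `edges` helper are hypothetical stand-ins written for this guide, not Airflow's own classes.

```python
class Task:
    """Toy stand-in for an Airflow task; not the real Airflow class."""

    def __init__(self, task_id):
        self.task_id = task_id
        self.downstream = []

    def __rshift__(self, other):
        # `a >> b` records that b runs after a, then returns b so that
        # chains such as `a >> b >> c` read left to right.
        self.downstream.append(other)
        return other


def edges(*tasks):
    """List every declared dependency as an (upstream, downstream) pair."""
    return [(t.task_id, d.task_id) for t in tasks for d in t.downstream]


extract = Task("extract")
transform = Task("transform")
load = Task("load")

# Declare the relationships; nothing is executed at this point.
extract >> transform >> load

print(edges(extract, transform, load))
# [('extract', 'transform'), ('transform', 'load')]
```

The point to take away is that a DAG file declares structure. Execution happens later, when the scheduler walks the declared edges.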

Operators: The Building Blocks of Workflows

Within Airflow, operators are the fundamental components that create tasks. They tell Airflow how to execute a particular operation. For instance, you may employ a PythonOperator to run a Python function, or a BashOperator to execute a bash command. Selecting the right operators based on your ETL requirements is crucial for achieving successful data transformations and workflows.
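To show what "an operator tells Airflow how to execute an operation" means, here is a small sketch with two toy classes. `PythonTask` and `BashTask` are hypothetical names invented for this example. Their constructor arguments `python_callable` and `bash_command` mirror the parameter names used by Airflow's PythonOperator and BashOperator, but the classes themselves are plain Python and assume a shell is available on the machine.

```python
import subprocess


class PythonTask:
    """Toy operator: runs a Python function when executed."""

    def __init__(self, task_id, python_callable):
        self.task_id = task_id
        self.python_callable = python_callable

    def execute(self):
        return self.python_callable()


class BashTask:
    """Toy operator: runs a shell command and returns its output."""

    def __init__(self, task_id, bash_command):
        self.task_id = task_id
        self.bash_command = bash_command

    def execute(self):
        result = subprocess.run(
            self.bash_command,
            shell=True,
            capture_output=True,
            text=True,
            check=True,  # raise if the command exits with an error
        )
        return result.stdout.strip()


def count_rows():
    # Placeholder transformation; a real task would query a data source.
    return len(["row1", "row2", "row3"])


tasks = [
    PythonTask("count_rows", python_callable=count_rows),
    BashTask("say_hello", bash_command="echo hello"),
]

for task in tasks:
    print(task.task_id, "->", task.execute())
```

Both classes expose the same `execute` method, which is why a scheduler can treat very different kinds of work uniformly.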

Airflow UI: Your Control Center

The Airflow UI is your main tool for day-to-day operation, providing a visual interface to monitor your workflows, DAGs, and tasks. From here, you can see the status of each run, inspect failures to troubleshoot them, and manually trigger runs when needed. Gaining familiarity with the Airflow UI will help you manage your workflows and respond to problems quickly.

Monitoring and Alerts

Proper monitoring of your workflows is vital. Airflow provides you with tools and settings to set up alerts in case something goes wrong. Whether it’s an execution failure or a task timeout, being promptly alerted allows you to address issues without significant delays. Adding robust monitoring can mean the difference between a smoothly running system and hours of data loss.
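Airflow tasks accept settings such as `retries`, `retry_delay`, and `on_failure_callback`. The sketch below reproduces the general idea in plain Python: retry a failing task a few times, then notify someone. The `run_with_retries` function is a hypothetical helper written for this guide, and its callback signature is simplified (Airflow passes a context object to its callbacks instead).

```python
import time


def run_with_retries(task, retries=2, retry_delay=0.0, on_failure_callback=None):
    """Run `task`, retrying on error. If every attempt fails, call the
    failure callback and re-raise the last error."""
    attempts = retries + 1
    for attempt in range(1, attempts + 1):
        try:
            return task()
        except Exception as exc:
            if attempt == attempts:
                if on_failure_callback is not None:
                    on_failure_callback(exc, attempt)
                raise
            time.sleep(retry_delay)  # wait before the next attempt


alerts = []


def send_alert(exc, attempts):
    # Stand-in for an email or chat notification.
    alerts.append(f"failed after {attempts} attempts: {exc}")


def always_fails():
    raise RuntimeError("source unavailable")


try:
    run_with_retries(always_fails, retries=1, on_failure_callback=send_alert)
except RuntimeError:
    pass  # the alert has already been recorded

print(alerts)  # ['failed after 2 attempts: source unavailable']
```

Retrying first and alerting second keeps transient problems, such as a brief network outage, from waking anyone up, while persistent failures still get reported.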

Backfilling: Playing Catch-Up

Sometimes, tasks need to be re-run or executed for past dates, a process known as backfilling. With Airflow, you can run a DAG for earlier dates even if those runs were missed initially. This feature is particularly useful for maintaining data integrity and ensuring that historical data isn't left unprocessed by your ETL process. Remember, past data can hold tremendous value for insights and decision-making!
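At its core, a backfill answers the question "which scheduled dates have no completed run?". The sketch below works that out for a daily schedule using only the standard library. The function name `missing_run_dates` and the sample dates are assumptions for illustration; Airflow tracks this information in its own metadata database.

```python
from datetime import date, timedelta


def missing_run_dates(start, end, completed):
    """Return every daily run date in [start, end] with no completed run."""
    days = (end - start).days + 1
    wanted = [start + timedelta(days=i) for i in range(days)]
    return [d for d in wanted if d not in completed]


# Hypothetical history: runs for Jan 1 and Jan 3 succeeded, the rest did not.
completed = {date(2024, 1, 1), date(2024, 1, 3)}

to_backfill = missing_run_dates(date(2024, 1, 1), date(2024, 1, 5), completed)
print([d.isoformat() for d in to_backfill])
# ['2024-01-02', '2024-01-04', '2024-01-05']
```

Each date in the result would then be handed to the pipeline as the logical date of a run, so the task processes that day's slice of data.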

Enhancing Efficiency with Airflow Management

Ultimately, mastering Airflow management gives you a greater capacity to run data pipelines efficiently. By understanding the principles of workflow orchestration, task scheduling, dependencies, DAGs, and operators, you'll be able to streamline your ETL processes significantly. This improves operational efficiency and also cuts down on wasted resources, since failed or redundant runs consume compute without producing results.

For more in-depth knowledge and advanced strategies in Airflow management, see the related guide on navigating Airflow management systems.
